What does it cost to incorporate behaviorally informed interventions within government programs?

A recent review of behaviorally informed interventions OES designed between 2015 and 2018 found that the interventions had a small but significant impact. This exciting result left us wondering: what did it cost to deliver these interventions? And how do these costs help us interpret the impact findings? To supplement the large-scale review, OES analyzed the cost of delivering the interventions included in the review.

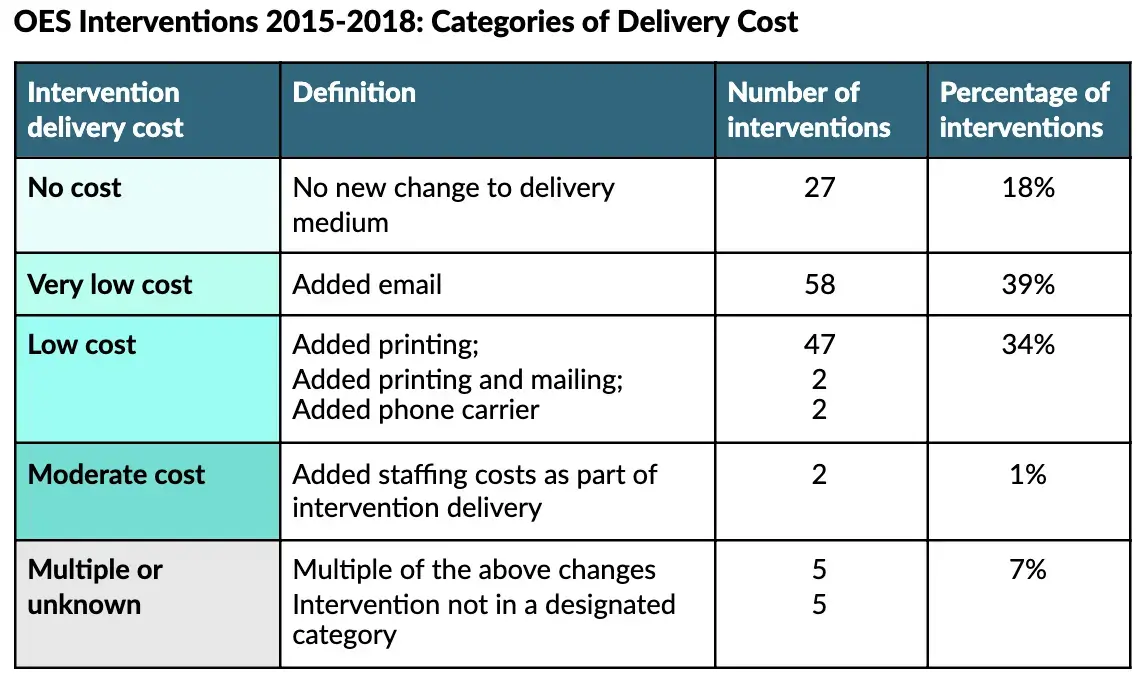

The vast majority of interventions (92%) had no, very low, or low ongoing marginal cost to deliver. This assessment was based on the distribution approach for each intervention and assumptions about the relative cost of these various distribution types, as detailed below. Behavioral insights were most often applied by creating a new message distribution; for example, adding a mailing campaign or introducing a reminder text. While these interventions introduced variable ongoing costs, the costs were generally quite small.

One example of an opportunity for applying behavioral insights at no ongoing marginal cost is the introduction of prompts to guide users in an online system. In 2015, OES designed an electronic signature box for GSA’s online sales reporting portal, aimed at improving accuracy and reducing errors. As a result of this change, which incurred no ongoing cost for the agency, portal users reported sales more accurately: the median self-reported sales amount was $445 higher for vendors using the behaviorally informed signature prompt, translating into an extra $1.59 million paid to the government in a single quarter.

Paired with the impact findings (an average improvement of 8.1% on key policy outcomes), this suggests that OES interventions between 2015 and 2018 generally yielded statistically significant improvements at no to low ongoing marginal cost. While OES did not conduct robust cost-effectiveness analyses, which would require detailed implementation and cost data, this review of interventions suggests a promising return on investment for agencies incorporating behavioral insights into their programs.

What’s next?

OES will continue to share what we learn in applying, testing, and costing behavioral interventions. In addition to generating insights, OES will support collaborators across government in using this evidence. Moving forward, OES is building evidence on a broader range of programs and interventions across government. Recognizing that more complex or expensive interventions can still be cost-effective, OES aims to build evidence on impact and identify cost-effective program improvements across government.